Agentic Analytics and the Future of Data Analytics in 2026

The role of a data analyst is not disappearing. It’s splitting in two.

1. The mechanical half — writing code, generating charts, building models from scratch — is being automated by AI-powered analytics tools that can do in minutes what used to take a day.

2. The human half — judgment, skepticism, communication, knowing what to do with the answers — is becoming more valuable than ever.

AI can handle execution quickly. Humans control the judgment layer.

This isn’t speculation. It’s already happening. And there’s a name for the technology driving it: agentic analytics.

Agentic analytics is a data analysis approach where specialized AI agents autonomously plan, execute, and narrate multi-step analyses — replacing the manual loop of writing code, running it, reviewing results, and iterating. Unlike traditional BI tools or single-prompt AI chatbots, agentic analytics uses multiple AI agents that collaborate through a structured pipeline: one plans the analysis, another writes the code, another executes it, another checks for errors and self-corrects, and another synthesizes the findings into a narrative report.
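
To make that pipeline concrete, here is a minimal sketch of the plan, write, execute, self-correct, and narrate loop. Everything in it is hypothetical: the `planner`, `coder`, and `narrator` functions are hard-coded stand-ins for AI agents, and the first attempt at the churn step is deliberately buggy so the retry path is visible. It is not how PlotStudio AI or any other product is implemented.

```python
from statistics import mean

def planner(question):
    # Hypothetical planner: a real agent would derive the steps from the question.
    return ["profile", "churn_rate"]

def coder(step, attempt):
    # Hypothetical coder: returns a callable that stands in for generated code.
    if step == "profile":
        return lambda rows: {"rows": len(rows)}
    if step == "churn_rate":
        if attempt == 0:
            # Deliberate bug on the first try: the column name is wrong.
            return lambda rows: {"churn_rate": mean(r["churn"] for r in rows)}
        return lambda rows: {"churn_rate": mean(r["churned"] for r in rows)}
    raise ValueError(f"unknown step: {step}")

def narrator(findings):
    # Hypothetical narrator: turns the collected findings into one sentence.
    return f"Analyzed {findings['rows']} rows; churn rate is {findings['churn_rate']:.0%}."

def run_pipeline(question, rows, max_retries=2):
    findings, log = {}, []
    for step in planner(question):
        for attempt in range(max_retries + 1):
            step_fn = coder(step, attempt)
            try:
                result = step_fn(rows)  # execute the "generated" code
            except Exception as exc:
                # Error recovery: record the failure and ask for a new attempt.
                log.append(f"{step}: attempt {attempt} failed ({exc!r})")
                continue
            findings.update(result)
            break
        else:
            raise RuntimeError(f"step {step!r} failed after {max_retries + 1} attempts")
    return findings, log, narrator(findings)

rows = [{"churned": 1}, {"churned": 0}, {"churned": 1}, {"churned": 0}]
findings, log, report = run_pipeline("what drives customer churn?", rows)
print(report)
print(log)
```

The log is the part worth reading as an analyst: it shows what failed and what the agent changed before it produced an answer.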

I explore this in my YouTube series “AI Agents Did the Whole Thing”, where I hand real datasets to PlotStudio AI and let the agents run the entire analysis without my writing a single line of code:

- Data profiling

- Cleaning

- Statistical testing

- Predictive modeling

- Written reports

In Episode 1, I analyzed a telecom churn dataset and the agents:

- Identified that month-to-month contracts had a 42% churn rate

- Built a gradient boosting model

- Engineered a customer lifetime value feature on their own

- Produced a full report

We also touched on something important: when AI does most of the analysis, the analyst’s role doesn’t go away — it becomes more important.

The job was never to produce plots or build models. It was always to investigate, quality-check, and communicate insights that drive decisions. AI just removes the mechanical bottleneck so analysts can focus on the work that actually matters.

This article digs deeper into that idea.

What Tasks Will AI Automate for Data Analysts?

Two categories of work that analysts spend the majority of their time on are already being automated.

Generating Plots and Charts

For years, a significant chunk of an analyst’s day went to writing matplotlib, plotly, seaborn, or ggplot code to produce visualizations. Getting the axis labels right. Adjusting the color palette. Exporting at the right resolution for the slide deck. Rebuilding the same chart with a different filter because a stakeholder asked.

That work is no longer your value-add.

Analysts spend up to 80% of their time on data prep and visualization — work that agentic AI now handles in seconds. The question isn’t whether this work gets automated. It’s what you do with the time you get back.

AI-powered analytics tools generate publication-ready visualizations in seconds. You describe what you want to see — “show me revenue by region over time” — and the AI writes the code, runs it, and produces a clean, interactive chart with proper formatting, titles, and statistical context. No code required from you.

This was never the hard part of analysis, but it consumed a disproportionate share of analysts’ time.

Model Building

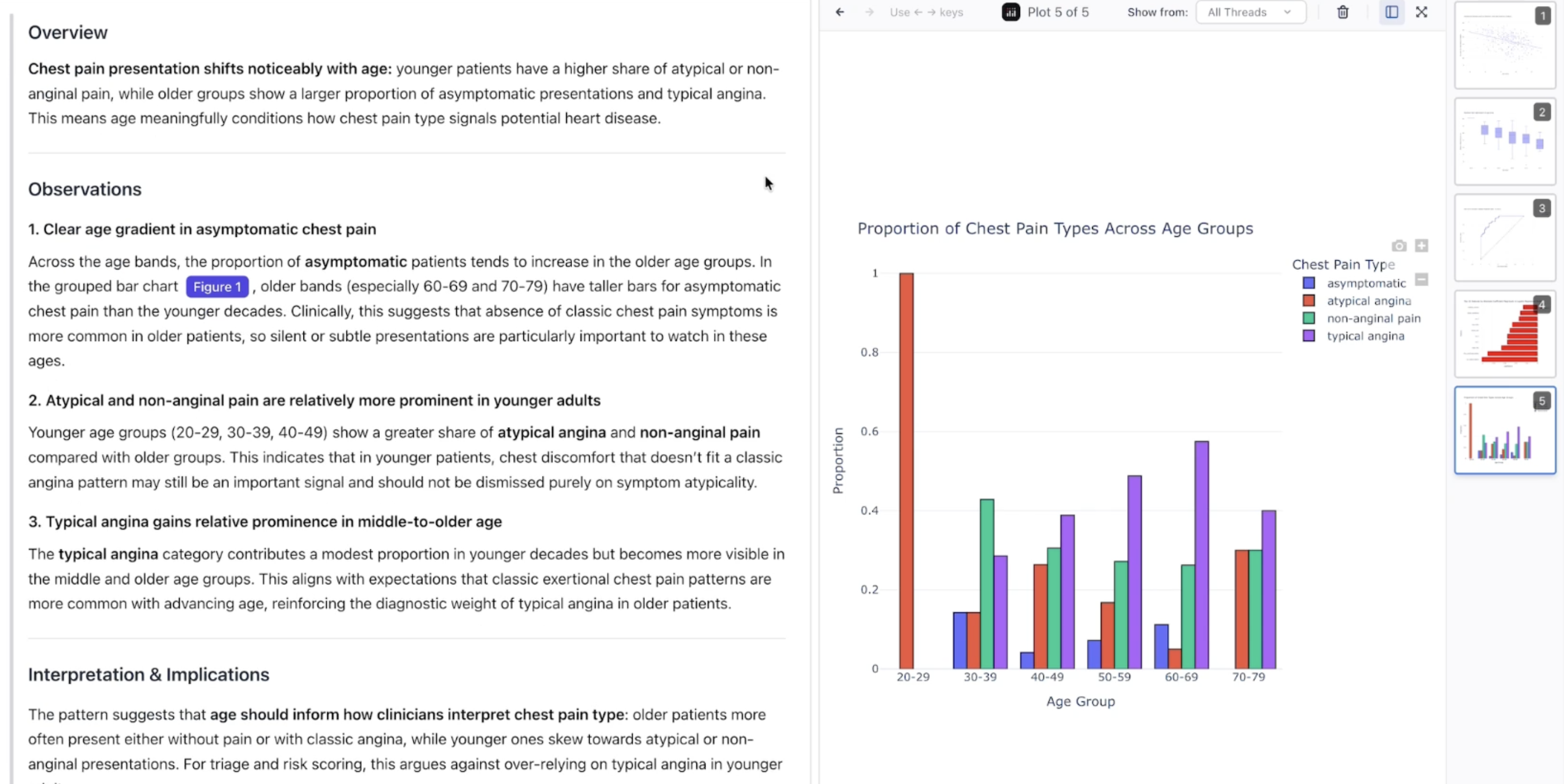

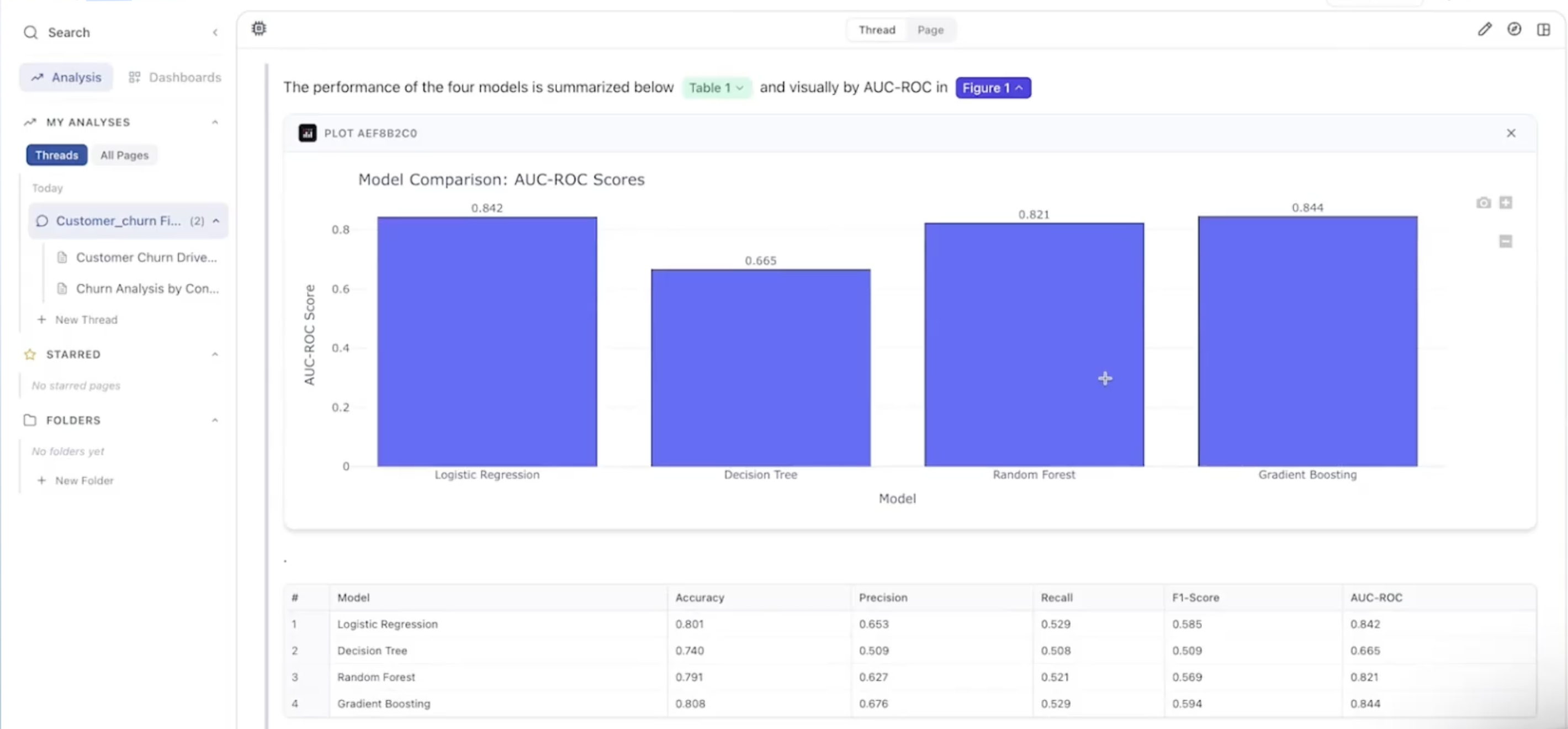

The same shift is happening with predictive modeling. The process of writing boilerplate code to split data, encode features, fit a model, tune hyperparameters, and evaluate performance — that process is automated. In Episode 1 of the “AI Agents Did the Whole Thing” series, I asked a single question — “what drives customer churn?” — and the AI agents built a full pipeline:

- Exploratory analysis

- Feature engineering (including creating a customer lifetime value column on their own)

- Model comparison across logistic regression and gradient boosting

- Feature importance ranking

All from one prompt.

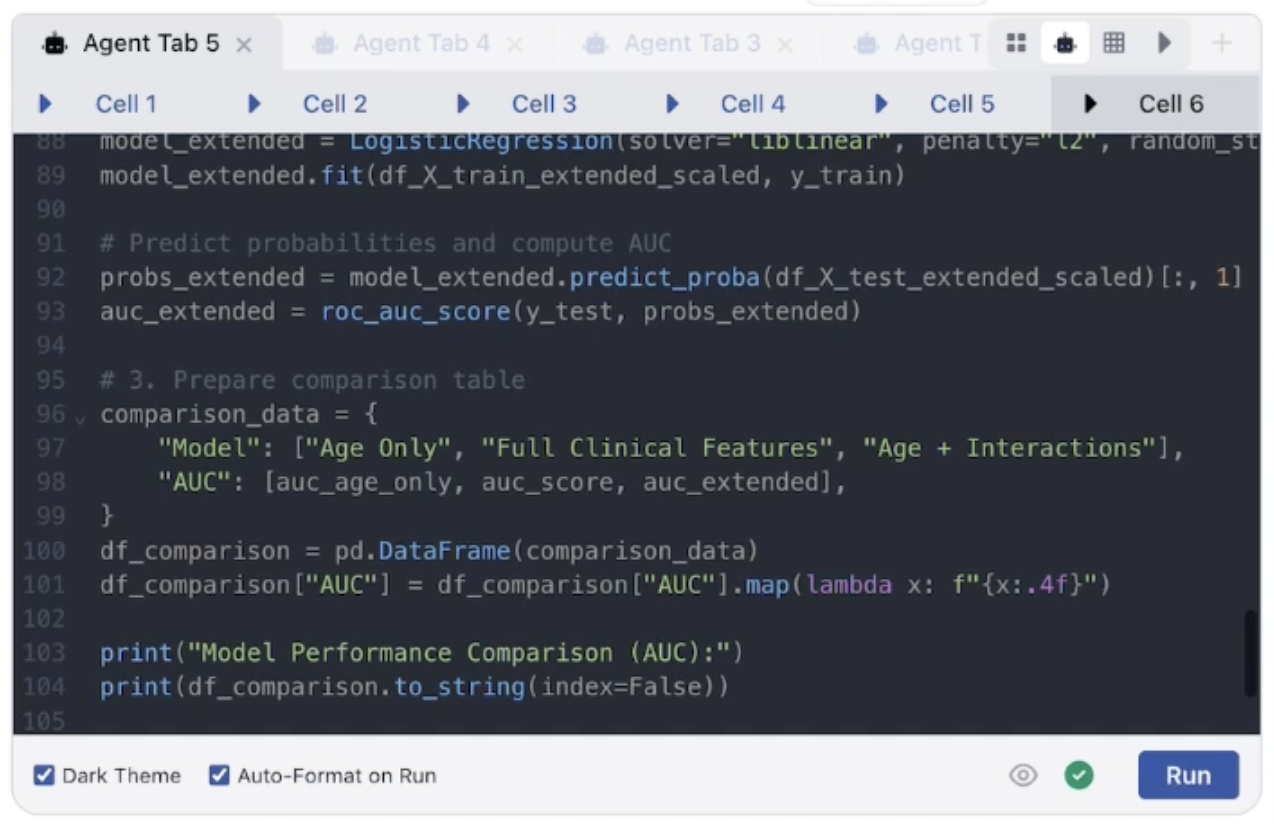

But here’s the nuance: you can even ask the AI to validate its own work. “Is there data leakage in this model?” “Do these features make sense?” “Is this the right statistical test for this distribution?” PlotStudio will answer those questions. So the technical validation is increasingly handled by AI too.

So where does the analyst actually come in?

At the decision boundary — where the data meets the real world. The AI lives inside the dataset. It can tell you the model is statistically sound, the features are significant, and the results are reproducible. What it can’t tell you is:

- This churn model is technically correct, but our CEO just signed a 3-year contract with this segment — so the finding is irrelevant right now

- These features are statistically significant, but we can’t act on them because we don’t control that variable

- This result contradicts what the sales team reported last quarter — someone is wrong and we need to figure out who before we present this

- This is a real finding, but if I present it in this context it’ll derail the board meeting — I need to frame it differently

The analyst’s value is knowing the world outside the dataset. The business context, the stakeholder dynamics, the constraints that never appear in a CSV. The AI tells you what the data says. The analyst decides what to do about it.

AI lives inside the dataset. The analyst lives at the boundary between the data and the business. That boundary is where decisions get made — and no model can navigate it for you.

What Skills Do Data Analysts Need in the AI Era?

As the mechanical work gets automated, two human skills become the core of the analyst’s job, and neither can be fully handed off to AI.

- Quality Assurance: verify assumptions, check for data leakage, validate statistical tests, and question every output before it reaches a stakeholder.

- Stakeholder Communication: defend your findings, translate results into business impact, and sell the "so what" to people who don’t speak data.

Quality Assurance

If you can’t defend the methodology, the analysis is worthless.

Quality assurance has always been part of the analyst’s job. But when AI is generating the analysis — writing the code, picking the statistical tests, engineering the features — QA becomes the primary job, not a side task.

This means: verifying assumptions before they propagate through an analysis. Checking for data leakage that would inflate model performance. Validating that the statistical test the AI chose actually applies to the data distribution you’re working with. Questioning whether the AI’s feature engineering introduced bias.
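
As one example of validating a test choice, here is a rough screen you could run before trusting a mean-based test such as a t-test on a small sample. It is a sketch, not a formal normality test: the `limit=1.0` cutoff is a rule-of-thumb assumption of mine, and the sample values are made up.

```python
from statistics import mean

def sample_skewness(values):
    # Moment-based skewness: third central moment over variance ** 1.5.
    m = mean(values)
    n = len(values)
    m2 = sum((v - m) ** 2 for v in values) / n
    m3 = sum((v - m) ** 3 for v in values) / n
    return m3 / m2 ** 1.5

def looks_skewed(values, limit=1.0):
    # Heuristic only: the limit is an assumed rule of thumb, not a standard.
    return abs(sample_skewness(values)) > limit

symmetric = [1, 2, 3, 4, 5]
long_tail = [1, 1, 1, 1, 2, 2, 3, 50]  # one extreme value drags the mean

print(looks_skewed(symmetric))  # symmetric data has zero skewness
print(looks_skewed(long_tail))
```

If the screen flags the data, that is a prompt to ask the AI why it chose a mean-based test, or to rerun the comparison with a rank-based alternative.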

Every output — whether from AI, a colleague, or yourself — should be treated as a hypothesis until verified.

The analyst who catches the flawed assumption before it reaches a stakeholder is the one who earns trust.

And here’s the thing — this isn’t new. In seasoned analytics teams, this is already how it works. If an analyst reaches a conclusion, another analyst on the team replicates it independently before it goes anywhere near a stakeholder. No questions asked. That’s not bureaucracy — it’s the standard. You wouldn’t publish a research paper without peer review. You wouldn’t ship code without a code review. Analysis that drives business decisions deserves the same rigor.

AI doesn’t change this. It just changes who did the first pass. Instead of QA-ing a colleague’s work, you’re QA-ing an AI agent’s work. The process is the same: check the methodology, verify the assumptions, confirm the output makes sense. What changes is that QA moves from something senior analysts do occasionally to something every analyst does constantly. It becomes the primary skill, not the final step.

Stakeholder Communication and Data Storytelling

Your analysis drives decisions. A finding that never gets communicated, or gets communicated poorly, might as well not exist.

Data storytelling is becoming the defining differentiator between analysts who advance and analysts who plateau. When AI handles the mechanical work, what separates you is your ability to:

- Defend your findings — explain why the methodology is sound, why the data supports the conclusion, why alternative interpretations were considered and ruled out

- Translate results into business impact — “churn is 42% in month-to-month contracts” is a number. “We’re losing $2.1M annually from a segment we could retain with a 12-month incentive” is a decision-ready insight

- Sell the “so what” to people who don’t speak data — executives, product managers, marketing teams. If they don’t act on your analysis, the analysis didn’t matter
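
The arithmetic behind that kind of translation is simple, which is exactly why it is worth showing your work. The sketch below uses made-up inputs (5,000 month-to-month customers at $1,000 of annual revenue each) chosen only so the result matches the $2.1M illustration above. They are not figures from the episode.

```python
def annual_revenue_at_risk(customers, churn_rate, annual_revenue_per_customer):
    # Customers expected to churn, times what each one was worth per year.
    churned = round(customers * churn_rate)
    return churned * annual_revenue_per_customer

# Hypothetical inputs, picked to reproduce the $2.1M example.
at_risk = annual_revenue_at_risk(
    customers=5_000,
    churn_rate=0.42,
    annual_revenue_per_customer=1_000,
)
print(f"${at_risk:,} per year")
```

Every input here is something a stakeholder can challenge, which is the point: a dollar figure with visible assumptions is easier to defend than a bare percentage.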

This is where tools like PlotStudio AI are already shifting the bar. PlotStudio doesn’t just produce charts and tables — it generates answers the way a data analyst would, with the “so what” baked into every output. The narrative tells you what the numbers mean, why they matter, and what to do about them. That’s a head start on the communication piece. But it’s still on you to verify the interpretation, contextualize it for your specific audience, and present it with conviction.

The analyst who can present a finding and get a decision made is 10x more valuable than the one who can build a model.

What Is Agentic Analytics and How Does It Work?

To understand why this shift is happening now, you need to understand what makes agentic analytics different from the AI-powered analytics tools that came before it.

Traditional Analytics vs. Augmented Analytics vs. Agentic Analytics

| | Traditional | Augmented | Agentic |
|---|---|---|---|
| Who does the work | Human writes all the code | AI assists the human | AI agents execute autonomously |
| Planning | Analyst plans manually | Analyst plans with AI suggestions | AI creates a structured analysis plan |
| Execution | Analyst writes and runs code | AI autocompletes code | AI writes, runs, and debugs code |
| Error recovery | Analyst debugs manually | AI suggests fixes | AI retries and self-corrects automatically |
| Output | Raw charts and tables | Charts with AI commentary | Full narrative report with charts, stats, and interpretation |
| Cross-analysis | Analyst synthesizes in their head | None | AI synthesizes findings across multiple experiments |
| Data profiling | Manual EDA with pandas | Basic automated stats | AI-powered 4-stage profiling on upload |

The key difference: augmented analytics helps you do the work faster. Agentic analytics does the work, and you verify it. The human role shifts from executor to director and quality controller.

Augmented analytics is a faster bicycle. Agentic analytics is a self-driving car. You still decide where to go — but you’re no longer pedaling.

The Paradigm Shift

This change in how analysis gets done enables four things that weren’t possible before:

- Speed: multiple analyses in minutes instead of hours or days

- Volume: run 10x the experiments and explore angles you’d skip under time pressure

- Depth: AI finds what you weren’t looking for, without the blind spots that come from domain familiarity

- Focus: spend time on QA, interpretation, and communication, not the mechanical parts

Speed — The time between “I have a question about this data” and “here’s a thorough, multi-step answer with charts, statistics, and interpretation” collapses. In my YouTube series, full churn analyses that would take an analyst a day were completed in minutes.

Volume — When each analysis costs minutes instead of hours, you run 10x the experiments. More experiments means more signal. Patterns that would have stayed hidden because you only had time for three analyses now surface because you ran twenty.

Depth — AI doesn’t have blind spots from domain familiarity. When you’ve worked in a domain for years, you develop intuitions — and those intuitions create shortcuts. You “know” the answer before you check. AI doesn’t do that. It explores angles and relationships in the data that you might overlook. It doesn’t cut corners under a deadline. This is one of the most underappreciated benefits: AI finds what you weren’t looking for.

Focus — Analysts spend time on the work that actually matters: QA, interpretation, communication, and decision intelligence — instead of the mechanical parts. The bottleneck shifts from “can we run this analysis?” to “what should we do with this finding?” That’s a much more valuable bottleneck to have.

People often say the analyst’s edge is “knowing what questions to ask.” But agentic analytics is already closing that gap too. PlotStudio AI, for example, generates a tailored research agenda the moment you upload a dataset — dozens of high-impact questions specific to your data’s columns, distributions, and patterns. After every analysis, it suggests follow-up questions based on what was just discovered. The AI is getting good at knowing what to ask.

What it can’t do is know what to do with the answers.

Which finding is worth escalating and which one gets filed away? What decision does this insight actually support? What action should someone take because of this result? Is this finding even relevant to the problem we’re trying to solve, or is it a statistical curiosity that doesn’t matter? That judgment — prioritizing, contextualizing, and acting on findings — is where the human analyst earns their seat at the table.

How Do You Validate AI-Generated Analysis?

Agentic analytics makes analysis faster, deeper, and more voluminous. But speed without rigor is dangerous. The faster you can generate analyses, the more important it becomes to verify them.

These are the skills that separate a data analyst from someone who just runs prompts:

- Critical Thinking: questioning assumptions and evaluating whether the right question was even asked

- Analytical Skepticism: default stance of "this might be wrong, prove it to me"

- Data Literacy: knowing what data can and can’t tell you, and explaining it in plain language

- Due Diligence: reading the code, checking the data, verifying the assumptions hold

- Sanity Checking: does this pass the smell test? Would a reasonable person believe this result?

Critical Thinking

Questioning assumptions, evaluating evidence, and reasoning carefully through conclusions. AI can run the analysis — it can’t tell you whether the question was the right one to ask in the first place.

Was the sample representative? Is the time period meaningful? Are we measuring the right thing? Are we conflating correlation with causation? These questions require understanding the business context, the data collection process, and the decision that will be made based on the results. No AI model has that context — and no amount of decision intelligence software replaces it.

Analytical Skepticism

The default stance should be: “this might be wrong — prove it to me.”

Every output — from AI, from a colleague, from yourself — should be treated as a hypothesis, not a fact. Statistical significance doesn’t mean business significance. A well-formatted chart with confident language can present a completely misleading conclusion.
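
Here is a small illustration of the gap between statistical and business significance, using a pooled two-proportion z-test written with the standard library. The counts are hypothetical: two groups of one million users, converting at 10.0% and 10.1%.

```python
import math

def two_proportion_p_value(success_a, n_a, success_b, n_b):
    # Two-sided p-value for a pooled two-proportion z-test.
    p_a, p_b = success_a / n_a, success_b / n_b
    pooled = (success_a + success_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    return math.erfc(abs(z) / math.sqrt(2))

# Hypothetical counts: conversions out of one million users per group.
p_value = two_proportion_p_value(100_000, 1_000_000, 101_000, 1_000_000)
lift = 101_000 / 1_000_000 - 100_000 / 1_000_000

print(f"p-value: {p_value:.3f}, absolute lift: {lift:.2%}")
```

With samples this large the p-value lands under 0.05, yet the lift is a tenth of a percentage point. Whether that is worth acting on depends on what the change costs, and no test statistic answers that.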

The analyst who defaults to skepticism and works backward from “why might this be wrong?” catches errors that the analyst who defaults to acceptance misses entirely.

Data Literacy

Understanding what data can and can’t tell you — and communicating that to others.

Knowing the difference between correlation and causation isn’t an academic exercise anymore. It’s the job. When AI produces a finding that “X is correlated with Y,” the analyst needs to know whether that relationship is causal, spurious, or confounded — and needs to explain that distinction in plain language to a stakeholder who wants a simple answer.

Being able to explain limitations, confidence levels, and uncertainty in plain language is a skill that will define the next generation of analysts.

Due Diligence

Verifying results before acting on them or handing them off.

This means reading the code the AI wrote. Checking that the data used was the right data. Checking that the assumptions built into the analysis hold. Checking that the cleaning steps didn’t distort the signal. Confirming that the model evaluation wasn’t inflated by data leakage.
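
One cheap way to check whether cleaning distorted the signal is to compare the data before and after. The sketch below is a minimal version of that idea, with a hypothetical `cleaning_report` helper and made-up order values. A real review would look at more than the mean.

```python
from statistics import mean

def cleaning_report(before, after):
    # How much data was dropped, and how far the mean moved as a result.
    dropped = 1 - len(after) / len(before)
    shift = (mean(after) - mean(before)) / mean(before)
    return {"dropped_fraction": dropped, "relative_mean_shift": shift}

# Hypothetical order values. The 900 might be an error or a real large order.
raw = [100, 110, 90, 105, 95, 900]
cleaned = [v for v in raw if v < 500]  # an "outlier removal" step

report = cleaning_report(raw, cleaned)
print(report)
```

Dropping one row out of six cuts the mean by more than half here. That may be exactly right or badly wrong, and only someone who knows whether a 900 order is plausible can say which.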

“Trust but verify” becomes the operating model. You don’t need to rewrite the code from scratch — but you need to read it, understand it, and confirm it does what it claims to do. This is why no-code analytics tools that hide the code entirely are dangerous for serious work. You need transparency.

Sanity Checking

Does this pass the smell test? Would a reasonable person believe this result?

AI can produce outputs that are statistically valid but practically absurd. A model that predicts churn with 99% accuracy because it inadvertently used a post-hoc variable, one recorded only after the outcome was already known. A revenue forecast that projects 400% growth because it extrapolated from a seasonal spike. A correlation that is mathematically real but practically irrelevant.
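
The suspiciously accurate churn model is the easiest of these to screen for. As a sketch, the check below flags any binary feature that, on its own, almost perfectly matches the target. The `suspicious_features` helper, the 0.95 threshold, and the column names are all assumptions for illustration, and the rows are made up.

```python
def suspicious_features(rows, target, threshold=0.95):
    # Flag binary features that nearly reproduce the target by themselves.
    flagged = {}
    features = [name for name in rows[0] if name != target]
    for name in features:
        agree = sum(row[name] == row[target] for row in rows) / len(rows)
        score = max(agree, 1 - agree)  # a perfectly inverted feature is just as suspicious
        if score >= threshold:
            flagged[name] = score
    return flagged

# Hypothetical churn rows. "cancellation_fee_charged" is only known after the
# customer has left, so it should never be a model input.
rows = [
    {"month_to_month": 1, "cancellation_fee_charged": 1, "churned": 1},
    {"month_to_month": 1, "cancellation_fee_charged": 0, "churned": 0},
    {"month_to_month": 0, "cancellation_fee_charged": 0, "churned": 0},
    {"month_to_month": 1, "cancellation_fee_charged": 1, "churned": 1},
    {"month_to_month": 0, "cancellation_fee_charged": 0, "churned": 0},
    {"month_to_month": 0, "cancellation_fee_charged": 1, "churned": 1},
]

flagged = suspicious_features(rows, target="churned")
print(flagged)
```

A flag is not proof of leakage. It is a list of columns to ask about: when is this value recorded, and would we have it at prediction time?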

The human in the loop catches what the math can’t. You bring common sense, business context, and the ability to ask “does this actually make sense?” That’s not a skill AI is close to replicating.

Can AI Replace Data Analysts by 2030?

No. But the role will look fundamentally different.

Today, the workflow is: AI runs the analysis, humans validate it. Tomorrow, AI will also be able to validate analysis — AI agents that run their own QA checks, flagging suspicious results, testing for data leakage, verifying that statistical test assumptions hold, and checking for reproducibility.

We’re already seeing early versions of this in multi-agent architectures where one AI generates the analysis and a separate AI evaluates it. This is how agentic analytics platforms handle error recovery now — and validation is the natural next step.

But even in that future, a human in the loop remains essential. Here’s why:

AI can check methodology. It can verify that the code runs correctly, that the test is appropriate, that the results are reproducible. What it can’t do is judge whether a finding matters. Whether the stakeholder will act on it. Whether the data represents reality or just what was convenient to collect. Whether the recommendation makes sense given the company’s strategy, culture, and constraints.

PlotStudio AI is already moving in this direction: down the line, it will be able to run its own quality assurance checks on the analyses it produces. But even when that happens, you as the analyst remain accountable to your clients for what you deliver. You still have to do your part.

Think of it like medicine. AI diagnostic tools can now detect certain conditions with remarkable accuracy. But you wouldn’t accept an AI diagnosis without a doctor reviewing it — even if the AI is correct. That review isn’t optional. It’s the minimum responsibility a professional has. The same applies to data analysis. Your stakeholders are making decisions based on your output. The fact that an AI generated it doesn’t absolve you of the responsibility to verify it. That’s not a limitation of the technology. That’s professional accountability.

Full automation of analysis and validation is coming. Full automation of judgment is not. The doctor still reviews the scan. The analyst still reviews the output.

The human role shifts from “did the code run correctly?” to “should we trust this enough to make a decision?”

The Bureau of Labor Statistics projects employment of data scientists to grow 36% between 2023 and 2033, one of the fastest growth rates across all occupations. Gartner predicts that by 2026, 75% of new data integration flows will be created by non-technical users. The demand isn’t shrinking. It’s shifting. The analysts who adapt, who learn to direct AI, validate its output, and communicate its findings, will be more in demand than ever.

Is Data Analytics Still a Good Career in 2026?

Yes — but the definition of the job is changing.

If your value proposition is “I can write Python and build charts” — that value is shrinking fast. Not because those skills are useless, but because AI does them in seconds. They become table stakes, not differentiators.

If your value proposition is “I can tell you whether this analysis is trustworthy and what to do about it” — you’re more valuable than ever. That skill set — critical thinking, skepticism, communication, domain knowledge — is exactly what AI can’t replicate and what businesses desperately need as AI-generated analyses proliferate.

The analysts who thrive will be the ones who treat AI as a junior analyst on their team. Fast. Tireless. Capable of impressive work. But needs supervision. Needs direction. Needs someone who knows what the right questions are, who can spot when the output doesn’t pass the smell test, and who can turn a finding into a decision.

Learn to direct AI, not compete with it.

This is exactly what I demonstrate in the “AI Agents Did the Whole Thing” YouTube series — real datasets, real analysis, showing what the AI does well and where the human analyst is still essential.

The Bottom Line

Agentic analytics doesn’t replace analysts. It removes the bottleneck so you can spend your time where it counts.

The mechanical work is going away. The thinking work is becoming the whole job. The global data analytics market is projected to grow from $7 billion in 2023 to over $300 billion by 2030. The future of data analytics isn’t fewer analysts — it’s analysts who do fundamentally different, more valuable work.

The future belongs to analysts who can think critically, communicate clearly, and know when to trust the output and when to question it. If that’s you, your career isn’t threatened by agentic analytics. It’s accelerated by it.

Want to see this paradigm shift in practice?

Upload your own data and see what agentic analytics actually feels like.

Try PlotStudio AI Free

Follow our YouTube playlist, where we release a new dataset analyzed entirely by AI every week.

Watch the Playlist